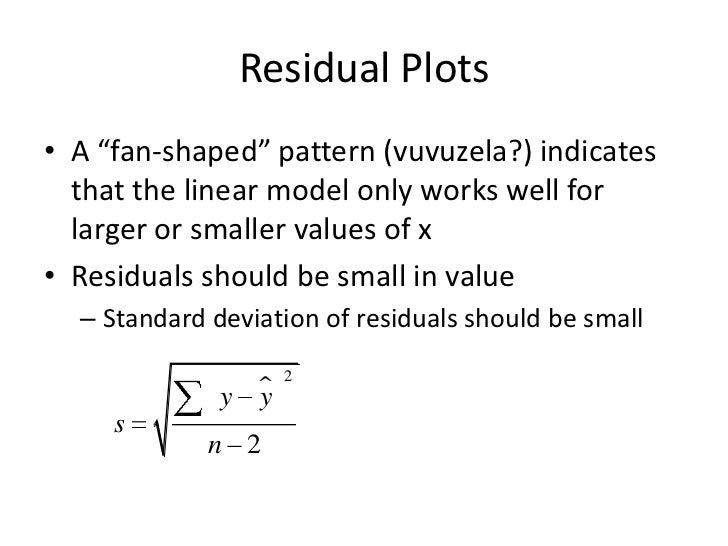

Hackdeploy: I enjoy building digital products and programming.

When embarking on a data science learning path, regression analysis is one of the first predictive algorithms you learn. It is widely used throughout statistics and business, and it is definitely a tool you must have in your data science arsenal. In this article, we will show you how to conduct a linear regression analysis using Python.

Regression analysis is a widely used and powerful statistical technique for quantifying the relationship between two or more variables. Most commonly, it is used to explain the relationship between independent and dependent variables.

The dependent variable is what you are trying to predict, while your inputs become your independent variables. For example, suppose we have a data set of revenue and price, and we are trying to quantify what happens to revenue when we change the price. Price becomes your independent variable; revenue (what you are trying to predict) is your dependent variable.

In order to correctly apply linear regression, you must meet 5 key assumptions, including:

- We are investigating a linear relationship.
- All variables follow a normal distribution.
- There is very little or no multicollinearity.

To understand more about these assumptions and how to test them using Python, read this article: Assumptions of Linear Regression with Python

Linear Regression Formula

When performing a regression analysis, the goal is to generate an equation that explains the relationship between your independent and dependent variables. In linear regression, the equation takes the form y = mx + b, in which m is the slope of the line and b is the point at which the regression line intercepts the y-axis.

How do we get the coefficients and intercept, you ask? This is where we will use Python's statistical packages to do the hard work for us. Let's see how we can come up with the above formula using scikit-learn (sklearn), the popular Python package for machine learning.

First, generate some data that we can run a linear regression on.

    from sklearn.datasets import make_regression
    # generate regression dataset
    X, y = make_regression(n_samples=100, n_features=1, noise=10)

Second, create a scatter plot to visualize the relationship.

    %matplotlib inline

In the resulting plot we can clearly see there is a relationship between our independent and dependent variables.

Once the regression line is fitted and drawn over the data, it's easy to see that it fits our input data quite well. Getting the coefficients and the y intercept is then a matter of reading them off the fitted model.

A metric you can use to quantify how much of the dependent variable's variation your linear model explains is called R-Squared (R²). In other words, it evaluates how closely the y values scatter around your regression line; the closer they are to the line, the better. R-Squared ranges from 0% to 100%.

Scoring the fitted model returns the coefficient of determination R² of the prediction. In our case, our regression line is able to explain 97.25% of the variation, pretty good!

Be careful though, you can't just use R-Squared to determine how good your model is. For example, your coefficients could be biased and you wouldn't know by looking at R-Squared. And if you have multiple independent variables, it doesn't tell you anything about them.

A residual plot is a scatter plot of the independent variables against the residuals. Residuals are the difference between the dependent variable (y) and the predicted variable (y_predicted). Let's calculate the residuals and plot them.

    residuals = y - y_predicted
    plt.plot(X, residuals, 'o', color='darkblue')

When analyzing a residual plot, you should see a random pattern of points. Visual inspection of these residual plots will let you know if you have bias in your independent variables and thus are breaking either the autocorrelation or homoscedasticity assumptions of regression analysis.
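The workflow described in this article (generate data, fit a line, read off the slope and intercept, score with R-Squared, compute residuals) can be sketched end to end as below. This is a minimal sketch, not necessarily the article's original code: the variable names `model`, `m`, `b`, `r_squared` and `r_squared_manual` are assumptions chosen for illustration, and `random_state=0` is added only so the run is repeatable.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression

# Generate a regression dataset with one feature.
# random_state=0 is an assumption added here for reproducibility.
X, y = make_regression(n_samples=100, n_features=1, noise=10, random_state=0)

# Fit an ordinary least squares line y = m*x + b.
model = LinearRegression()
model.fit(X, y)

# Slope (one coefficient per feature) and y intercept of the fitted line.
m = model.coef_[0]
b = model.intercept_

# R-Squared: the coefficient of determination of the prediction.
r_squared = model.score(X, y)

# Residuals: dependent variable minus predicted variable.
y_predicted = model.predict(X)
residuals = y - y_predicted

# The same R-Squared computed by hand as 1 - SS_res / SS_tot.
ss_res = np.sum(residuals ** 2)
ss_tot = np.sum((y - y.mean()) ** 2)
r_squared_manual = 1 - ss_res / ss_tot

print(f"y = {m:.2f}x + {b:.2f}")
print(f"R-Squared: {r_squared:.2%}")
```

The `residuals` array computed here is what would be passed to a scatter plot against `X` to produce a residual plot. The exact R-Squared value depends on the random data, so it will not necessarily match a figure quoted elsewhere.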
